With time and effort, you can train your brain to make better decisions and overcome indecisiveness. Start by applying the...

Read article : Brain Based Strategies for Indecisiveness: Neuroscience of Better Decisions

Cognitive Bias

The hidden errors in your operating system. Learn to identify and neutralize the mental shortcuts that lead to poor decision-making and strategic blind spots.

59 articles

Cognitive Biases Are Not Errors in Thinking — They Are the Architecture of Thinking

The standard framing of cognitive bias presents it as a defect — a systematic deviation from rational judgment that a sufficiently disciplined mind ought to correct. Behavioral economics has cataloged hundreds of these biases, each named and taxonomized as though they were bugs in otherwise functional software. Twenty-six years of working with the neural substrates of decision-making have shown me something the taxonomists routinely miss: cognitive biases are not errors layered on top of an otherwise rational system. They are the system. They are the computational architecture the brain uses to process an environment that delivers far more information per second than any prefrontal circuit can evaluate consciously.

The human brain receives approximately 11 million bits of sensory data per second. The prefrontal cortex — the seat of deliberate, effortful reasoning — can process roughly 50 bits per second. That is a compression ratio of more than 200,000 to 1. The brain solves this problem the only way any information-processing system can: by building shortcuts. Heuristics. Pattern-matching algorithms calibrated against prior experience that generate rapid, energy-efficient outputs without requiring the full analytical stack to run on every input. Those shortcuts are cognitive biases. They are not deviations from the brain’s intended function. They are the intended function, operating exactly as designed.
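The ratio quoted above follows from simple division. A minimal Python sketch, taking the two bandwidth figures from the text at face value (they are the article's estimates, not measurements made here; the constant and function names are illustrative):

```python
# Figures quoted in the text above; treated here as given estimates.
SENSORY_BITS_PER_SECOND = 11_000_000   # approximate sensory input
DELIBERATE_BITS_PER_SECOND = 50        # approximate deliberate processing

def compression_ratio(input_rate: float, processed_rate: float) -> float:
    """Return how many input bits arrive for each bit that is processed."""
    return input_rate / processed_rate

ratio = compression_ratio(SENSORY_BITS_PER_SECOND, DELIBERATE_BITS_PER_SECOND)
print(f"{ratio:,.0f} to 1")  # prints "220,000 to 1"
```

The result, 220,000 to 1, is consistent with the "more than 200,000 to 1" figure in the paragraph.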

This distinction matters enormously — not as an intellectual exercise, but as a practical one. If biases are errors, the intervention is education: teach people the error, and they correct it. If biases are architecture, the intervention is fundamentally different. You cannot correct architecture by naming it. You recalibrate it by engaging the specific neural circuits that generate it, under the specific conditions that permit those circuits to update. Everything I do with high-capacity individuals navigating consequential decisions begins with that architectural understanding.

Dual-Process Architecture: Where System 1 and System 2 Actually Live in the Brain

The dual-process framework — popularized as System 1 (fast, automatic) and System 2 (slow, deliberate) — captures something real about how the brain allocates cognitive resources. But the popular version treats these as metaphors. They are not metaphors. They are distinguishable neural circuits with identifiable anatomical substrates, and understanding where they live changes what effective intervention looks like.

System 1 processing is implemented primarily through subcortical structures and their rapid feed-forward connections. The amygdala evaluates threat and emotional salience in as few as 120 milliseconds — well before conscious awareness. The basal ganglia, particularly the striatum, execute learned behavioral programs and pattern-matched responses, drawing on reward history and procedural memory stored across millions of repetitions. The anterior insula generates gut-level interoceptive signals that arrive as intuition, certainty, or unease — somatic markers that influence decisions before the prefrontal cortex has finished constructing the problem. These structures operate in parallel, beneath conscious access, and their outputs arrive as feelings, impulses, and certainties rather than as reasoned conclusions.

System 2 processing depends on the dorsolateral prefrontal cortex (dlPFC), the anterior cingulate cortex (ACC), and frontoparietal networks that support working memory, attentional control, and deliberate evaluation. These circuits are metabolically expensive, bandwidth-limited, and slow relative to the subcortical systems they are meant to supervise. They fatigue measurably — prefrontal glucose consumption increases under sustained cognitive load, and regulatory capacity degrades as the day progresses. This is not a metaphor for willpower depletion. It is cellular metabolism.

The critical insight is this: the vast majority of cognitive biases originate in System 1 circuitry — the amygdala, striatum, and insula — and they execute before System 2 has the opportunity to intervene. The prefrontal cortex does not decide whether to apply a bias and then apply it. The bias has already been applied. The amygdala has already weighted the threat. The basal ganglia have already selected the pattern-matched response. What the prefrontal cortex receives is a processed output — a conclusion dressed as perception — and its job is to evaluate, endorse, or override that conclusion after the fact. Understanding this temporal sequence is essential for understanding why most approaches to bias correction fail.

Confirmation Bias Is a Prediction-Error Management Strategy

Of the hundreds of documented biases, confirmation bias is the most pervasive and the most consequential. The standard definition frames it as the tendency to seek, interpret, and remember information that confirms existing beliefs while ignoring contradictory evidence. That description is accurate but superficial. It describes what happens without explaining why the brain does it — and the why changes everything about how to address it.

The brain operates as a Bayesian prediction engine. It does not passively receive information and then form beliefs. It generates predictions — probabilistic models of what will happen next — and then compares incoming sensory data against those predictions. When data confirms the prediction, the system runs efficiently: minimal energy expenditure, minimal updating required. When data contradicts the prediction, a prediction error fires — a metabolically costly signal that demands the brain either update its model or find a way to resolve the discrepancy without updating.

Confirmation bias is the brain choosing the second option. Updating prediction models is expensive. It requires prefrontal engagement, working memory allocation, and the dismantling of associative networks that may have been built over decades of experience. The brain has a strong metabolic incentive to preserve existing models whenever the cost of updating exceeds the perceived benefit — and in most cases, particularly for emotionally weighted beliefs, the cost-benefit calculation tips decisively toward preservation. This is not laziness. It is energy economics running at the neural level.

I observe this mechanism daily in individuals whose professional success depends on accurate judgment but whose personal belief systems are organized around predictions calibrated in environments that no longer apply. The same executive who updates market models in real time based on contradictory data will dismiss contradictory evidence about a relationship pattern, a leadership style, or a self-assessment — because the personal models carry emotional weight that multiplies the metabolic cost of updating. The bias is not consistent across domains. It is proportional to the emotional investment in the prediction model, and that proportionality is governed by amygdala-prefrontal coupling that varies by domain, by context, and by individual neural architecture.
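The cost-benefit account in this section can be made concrete with a toy model in Python. It is a sketch under stated assumptions, not a model of real neural computation: the belief is a single probability, evidence arrives as a likelihood ratio, and `update_cost` and `emotional_weight` are hypothetical parameters introduced only for illustration. The belief is revised by Bayes' rule only when the size of the prediction error exceeds the emotionally weighted cost of revising it.

```python
def bayes_posterior(prior: float, likelihood_ratio: float) -> float:
    """Posterior probability of a belief, given its prior and the ratio
    P(evidence | belief true) / P(evidence | belief false)."""
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)

def gated_update(prior: float,
                 likelihood_ratio: float,
                 update_cost: float = 0.1,        # hypothetical baseline cost
                 emotional_weight: float = 1.0    # hypothetical cost multiplier
                 ) -> float:
    """Revise the belief only if the prediction error outweighs the cost.

    The prediction error is taken to be the distance between the prior and
    the posterior that a full Bayesian update would produce (an assumption
    of this sketch, not a claim from the text).
    """
    candidate = bayes_posterior(prior, likelihood_ratio)
    prediction_error = abs(candidate - prior)
    if prediction_error > update_cost * emotional_weight:
        return candidate   # model is updated
    return prior           # model is preserved; the evidence is discounted

# Same contradictory evidence, two domains with different emotional weight.
market_belief = gated_update(0.9, 0.25, emotional_weight=1.0)
personal_belief = gated_update(0.9, 0.25, emotional_weight=5.0)
print(round(market_belief, 3), personal_belief)
```

With identical evidence, the low-weight belief moves from 0.9 to about 0.69 while the high-weight belief stays at 0.9, which mirrors the domain-dependent pattern described above.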

Negativity Bias and the Amygdala’s Evolutionary Weighting System

The brain does not treat positive and negative information symmetrically. Negativity bias — the tendency to weight negative stimuli more heavily than equivalent positive stimuli — is one of the most robust findings in cognitive neuroscience, and its neural substrate has been precisely mapped. The asymmetry originates in the amygdala, which responds to threatening or aversive stimuli with greater activation amplitude, faster processing speed, and more durable memory consolidation than it allocates to rewarding or neutral stimuli.

This is not a design flaw. It is an evolutionary calibration with impeccable survival logic. In ancestral environments, the cost of missing a threat was death. The cost of missing a reward was a missed opportunity. The asymmetry in consequences produced an asymmetry in neural architecture: the amygdala developed a processing bias toward negative information that persists across every modern context, whether or not the threat is lethal. A critical email activates the same amygdala circuitry that once responded to predators — not at the same intensity, but through the same architectural pathway, with the same weighting bias.

The research quantifies this asymmetry. Psychologist John Cacioppo’s work established that negative stimuli produce greater electrocortical response than equivalently intense positive stimuli. Rick Hanson’s synthesis of the neuroscience literature estimates a roughly 3:1 negativity-to-positivity ratio in attentional capture and memory encoding. On that estimate, roughly three positive experiences are required to offset one negative experience of equivalent intensity. The reason is not that people are pessimistic; it is that the amygdala allocates processing resources with a survival-calibrated weighting function that no amount of positive thinking overrides at the architectural level.
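The weighting described above can be illustrated with a few lines of Python. This is a toy sketch, not a neural model: it assumes experiences can be scored on a single signed intensity scale and applies the roughly 3:1 estimate quoted above as a fixed multiplier on negative values. The name `net_impact` and the scoring scale are hypothetical.

```python
NEGATIVITY_WEIGHT = 3.0  # the roughly 3:1 estimate quoted in the text

def net_impact(intensities, negativity_weight: float = NEGATIVITY_WEIGHT) -> float:
    """Sum signed experience intensities, multiplying negative ones
    by the negativity weight before adding them."""
    total = 0.0
    for intensity in intensities:
        if intensity < 0:
            total += intensity * negativity_weight
        else:
            total += intensity
    return total

one_for_one = net_impact([-1.0, 1.0])               # one negative, one positive
three_for_one = net_impact([-1.0, 1.0, 1.0, 1.0])   # one negative, three positives
print(one_for_one, three_for_one)  # prints "-2.0 0.0"
```

Under these assumptions a single positive experience leaves the balance negative, and it takes three to bring it back to zero.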

What this means in practice, in the lives of the individuals I work with, is that the negativity bias is not a thinking problem that cognitive flexibility and thought-pattern retraining alone can resolve. It is a subcortical weighting algorithm that runs before the flexible thinking systems have access to the data. Recalibrating it requires engaging the amygdala’s threat-valuation circuitry directly, through mechanisms that operate at the speed and depth where the bias is actually applied.

The Dunning-Kruger Effect Has a Neural Substrate — and It Is Not Stupidity

The Dunning-Kruger effect — the observation that individuals with low competence in a domain systematically overestimate their ability while experts systematically underestimate theirs — has been reduced in popular culture to a joke about unintelligent people being too unintelligent to know it. That framing misses the neuroscience entirely. The effect is not about intelligence. It is about metacognitive circuitry — and specifically, about the prefrontal infrastructure required to accurately evaluate one’s own cognitive performance.

Metacognition — the brain’s capacity to monitor and evaluate its own processing — depends on the anterior prefrontal cortex (aPFC) and its connections to the dorsomedial prefrontal cortex and the posterior parietal regions involved in self-referential processing. These circuits develop with expertise. As competence in a domain increases, the metacognitive networks associated with that domain become more refined, more connected, and more capable of generating accurate self-assessments. In the early stages of learning, those networks are underdeveloped — not because the individual is unintelligent, but because the metacognitive infrastructure is domain-specific and requires repeated calibration against feedback to generate reliable self-models.

Overconfidence bias at the novice end of the spectrum reflects the absence of a calibrated metacognitive model — the brain defaulting to its general self-assessment rather than a domain-specific one. At the expert end, the systematic underestimation reflects the opposite: a highly developed metacognitive system that is acutely aware of the complexity it has not yet mastered, generating conservative estimates precisely because it can see the full scope of what remains unknown. In my work with executives navigating unfamiliar domains — new industries, new relationship dynamics, new neurological territory — I observe this asymmetry directly. The individuals most dangerous in their confidence are those operating with sophisticated general intelligence but underdeveloped domain-specific metacognition in the area where they are making consequential decisions.

Why Debiasing Training Fails — The System 1 Problem

Corporations, universities, and professional development programs have invested substantially in debiasing interventions — workshops, training modules, and awareness campaigns designed to reduce the influence of cognitive biases on organizational decision-making. The evidence for their effectiveness is discouraging. A 2019 meta-analysis published in the Journal of Experimental Psychology found that awareness-based debiasing interventions produce minimal lasting behavioral change. People learn the names of biases. They can identify them in case studies. They continue to exhibit them in their own decisions at nearly unchanged rates.

The neuroscience explains why. Debiasing training targets System 2 — the prefrontal cortex, conscious reasoning, deliberate evaluation. It teaches people to recognize biases cognitively, to apply corrective frameworks intellectually, to engage their analytical capacity when they suspect a bias is operating. The problem is that biases do not operate in System 2. They originate in System 1 — in the amygdala’s threat weighting, the striatum’s pattern matching, the insula’s interoceptive certainty signals. By the time System 2 is engaged, the biased output has already been generated and is already shaping perception, emotion, and behavioral impulse.

Teaching someone that confirmation bias exists does not prevent their anterior cingulate cortex from generating a conflict signal when they encounter evidence that contradicts a strongly held belief. Teaching someone about negativity bias does not reduce the amygdala’s activation amplitude in response to ambiguous social cues. Teaching someone about the anchoring effect does not prevent the striatum from encoding the first number encountered as a reference point for subsequent evaluation. The training addresses the wrong layer of the system. It is like teaching someone the physics of turbulence and expecting it to eliminate their fear of flying — the intellectual understanding operates in a circuit that does not govern the emotional response.

This is the central limitation I see in organizations that invest heavily in cognitive bias awareness while continuing to make the same biased decisions. The individuals are not failing to apply what they learned. They are applying it, but in System 2, after System 1 has already committed to its output. The corrective layer is running downstream of the layer that generates the bias, and the downstream correction is consistently overmatched by the upstream signal. Effective recalibration requires reaching System 1 directly: engaging the subcortical circuits where biases originate, under conditions that permit pattern-recognition and automated-response programs to genuinely update rather than acquire an intellectual overlay.

Recalibrating Bias at the Neural Prediction Level — Dr. Ceruto’s Approach

My methodology for working with cognitive bias begins where most approaches stop: at the layer of neural architecture where biases actually operate. This is not a matter of adding a new intellectual framework on top of an existing system. It is a matter of accessing and modifying the prediction models, threat-weighting algorithms, and pattern-matching programs that generate biased outputs before conscious awareness begins.

The first step is mapping. Every individual arrives with a unique bias profile — a specific constellation of overweighted predictions, miscalibrated threat responses, and domain-specific metacognitive gaps that produce their characteristic decision errors. An executive who catastrophizes interpersonal ambiguity but navigates financial uncertainty with calibrated precision has a bias profile that is domain-differentiated, not domain-general. Mapping that profile — identifying which biases operate in which domains, which prediction models are miscalibrated, and which subcortical circuits are driving the outputs — is the prerequisite for intervention. Generic bias awareness treats every individual as though they have the same architecture. They do not.

The second principle is real-time intervention. Prediction models update most efficiently when the prediction is active — when the brain is in the process of generating the biased output. This is when neural plasticity is highest, when the relevant circuits are firing and available for modification. Real-Time Neuroplasticity™ is designed to operate in this window: identifying the moment a prediction model is executing, introducing calibrated prediction errors that the system cannot resolve through its existing model, and facilitating the model update that awareness-based training cannot reach. The bias is not analyzed retrospectively. It is intercepted live.

The third principle is calibration rather than elimination. A brain without cognitive biases would be a brain incapable of functioning in a complex environment — processing every decision through full prefrontal deliberation would produce decision paralysis, not optimal judgment. The objective is not to eliminate the heuristic architecture. It is to recalibrate it — to update the prediction models that are generating maladaptive outputs while preserving the computational efficiency that makes rapid, effective decision-making possible. The goal is a brain that applies its shortcuts accurately, not a brain that has no shortcuts.

If your cognitive biases are producing consequential errors in leadership decisions, relationship patterns, self-assessment, or risk evaluation, and you recognize that knowing about the bias has not changed the bias, schedule a strategy call with Dr. Ceruto. In that call, I assess which prediction models are miscalibrated, which circuits are generating the errors, and what recalibration at the architectural level would require for your specific neural profile. The conversation begins there because the assessment is the foundation of the intervention, and getting the architecture right is what separates genuine change from intellectual awareness that produces no behavioral shift.

What 59 Articles on Cognitive Bias Explore — and Why the Depth Is Necessary

This section of MindLAB Neuroscience encompasses 59 articles examining cognitive bias from the level of neural mechanism rather than behavioral catalog. The depth is deliberate. The popular treatment of this subject, lists of biases with definitions and examples, is already available on every behavioral economics blog, psychology website, and AI overview on the internet. That content serves a purpose, but not the purpose of genuine change. Understanding that you have a confirmation bias does not recalibrate the prediction-error management system that generates it. Knowing that negativity bias exists does not modify the amygdala’s threat-weighting algorithm.

The articles collected here approach cognitive bias from the direction that matters for individuals who are looking not for definitions but for understanding that translates into different outcomes. They cover the neuroscience of dual-process architecture and its implications for decision quality; how cognitive distortions and systematic thinking errors relate to the broader landscape of biased prediction; the metacognitive circuitry that governs self-assessment accuracy; and the specific conditions under which prediction models can genuinely update versus the conditions under which intellectual overlay masquerades as change.

Every article in this section is built on the premise that cognitive biases are not character flaws, intellectual failures, or problems to be solved through awareness. They are neural architecture — computational solutions the brain developed for problems it was once required to solve, still running in environments where the original problems no longer apply. The science of how that architecture operates is the prerequisite for the science of how it changes. These articles provide both.

Latest Articles

Your brain is a rebel waiting to be unleashed. Neuroplasticity isn't just scientific jargon—it's your ticket to a mental revolution....

Read article : Brain Flex: Unlock Neuroplasticity for Personal Growth

Unleash the Power of Emotional Intelligence in Leadership. In our hyper-connected era, emotional intelligence (EQ) has emerged as a game-changing...

Read article : Emotional Intelligence Pitfalls: Avoiding Common Missteps for Effective Leadership

Have you ever found yourself spiraling into a whirlwind of worst-case scenarios, even when faced with seemingly mundane situations? If...

Read article : Master Your Mind: Address Catastrophic Thinking

Overgeneralization is a cognitive distortion where individuals apply a single negative experience to all future situations. This thinking pattern can...

Read article : Examples of Overgeneralization: The Neural Pattern That Locks In “Always” and “Never”

Unlock the secrets of how neurons work and their role in personal and professional development. Dive deep into the neuroscience...

Read article : The Hidden Orchestra: How Neurons Work to Shape Our Lives

Discover how thinking dispositions, such as open-mindedness, curiosity, and truth-seeking, shape decision-making, fuel personal growth, and set the foundation for true innovation...

Read article : Thinking Dispositions: The Secret Drivers of Exceptional Reasoning; Leadership; and Growth

Dive into the neuroscience of contentiousness to discover how evolutionary biology, psychological frameworks, and social dynamics wire us for conflict,...

Read article : The Neuroscience of Contentiousness: What Your Brain Is Really Doing

Unlock how alpha vs beta traits are rooted in brain structure and chemistry. This deep dive reveals the neuroscience, evolutionary...

Read article : Alpha vs Beta Traits Neuroscience: Power, Connection, and the Brain

Ever wondered why you feel instantly drawn to some people but not others? The neuroscience of sexual attraction reveals that...

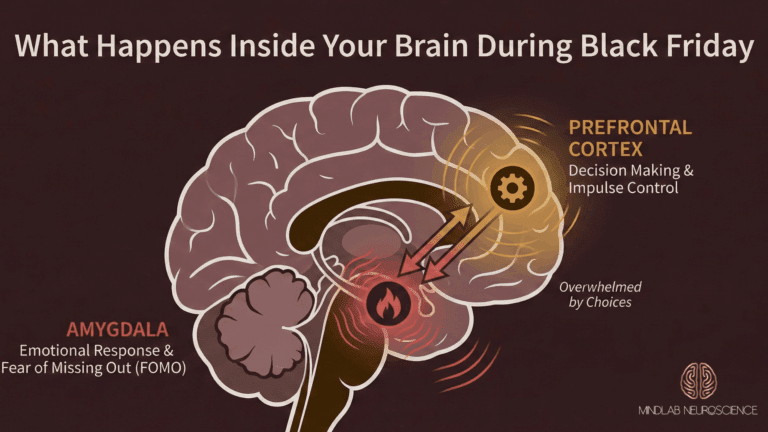

Read article : The Neuroscience of Sexual Attraction: Why Your Brain Chooses Who You Desire

Your brain's threat and reward systems activate during Black Friday. Here's how neuroscience-based coaching can rewire your impulse patterns for...

Read article : Black Friday Brain: Neuroscience Behind Impulse Purchases

Time optimism can wreck your schedule even when you’re competent. Discover why your brain misinterprets time at work and apply...

Read article : Time Optimism at Work: Why Smart People Underestimate Time and How to Resolve It